Should VLMs be Pre-trained with Image Data?

Mar 10, 2025·,,,,,,,,,,·

0 min read

Sedrick Keh

Jean Mercat

Samir Yitzhak Gadre

Kushal Arora

Igor Vasiljevic

Benjamin Burchfiel

Shuran Song

Russ Tedrake

Thomas Kollar

Ludwig Schmidt

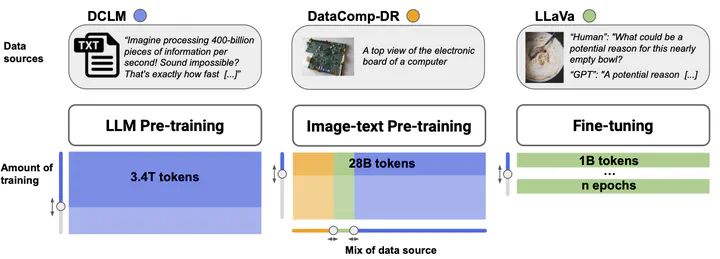

Achal Dave

Three-stage training pipeline for LLMs and VLMs: LLM Pre-training (3.4T tokens from DCLM text data), Image-text Pre-training (28B tokens from DataComp-DR image-text pairs), and Fine-tuning (1B tokens from LLaVa conversational data).

Three-stage training pipeline for LLMs and VLMs: LLM Pre-training (3.4T tokens from DCLM text data), Image-text Pre-training (28B tokens from DataComp-DR image-text pairs), and Fine-tuning (1B tokens from LLaVa conversational data).Abstract

Pre-trained LLMs that are further trained with image data perform well on vision-language tasks. While adding images during a second training phase effectively unlocks this capability, it is unclear how much of a gain or loss this two-step pipeline gives over VLMs which integrate images earlier into the training process. To investigate this, we train models spanning various datasets, scales, image-text ratios, and amount of pre-training done before introducing vision tokens. We then fine-tune these models and evaluate their downstream performance on a suite of vision-language and text-only tasks. We find that pre-training with a mixture of image and text data allows models to perform better on vision-language tasks while maintaining strong performance on text-only evaluations. On an average of 6 diverse tasks, we find that for a 1B model, introducing visual tokens 80% of the way through pre-training results in a 2% average improvement over introducing visual tokens to a fully pre-trained model.

Type

Publication

In ICLR 2025